
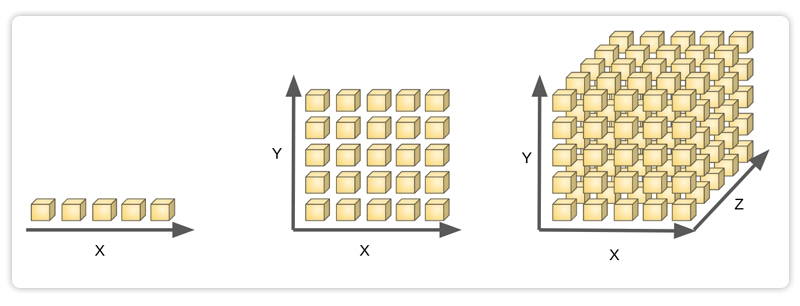

High-dimensional clustering is the cluster analysis of objects described by many attributes. As in any cluster analysis, the goal is to group similar objects, but a high-dimensional data space is enormous and can involve complex data types and properties. A major challenge is discovering the set of attributes that is relevant to each cluster, because a cluster is recognized and described by the attributes that characterize it. To cluster high-dimensional data, we therefore need to search not only for clusters but also for the subspaces in which they exist. One common strategy is to reduce high-dimensional data to a low-dimensional representation, which simplifies finding clusters. Some applications also require cluster models designed specifically for multidimensional data.

In general, clustering is an unsupervised learning strategy. Unsupervised learning is the process of drawing inferences from datasets that consist of input data without labeled responses. The objective of clustering is to divide a population or set of data points into groups so that points within the same group are more similar to each other than to points in other groups.
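k-means is one common (but by no means the only) way to formalize this objective: it alternates between assigning each point to its nearest center and moving each center to the mean of its points, which never increases the within-cluster sum of squared distances. The following is a minimal pure-Python sketch of Lloyd's k-means; the function names, the toy points, and initializing from randomly chosen data points are illustrative assumptions, not part of any particular library.

```python
import math
import random


def assign(points, centers):
    """Index of the nearest center (Euclidean distance) for every point."""
    return [min(range(len(centers)), key=lambda j: math.dist(p, centers[j]))
            for p in points]


def kmeans(points, k, iters=100, seed=0):
    """Minimal Lloyd's k-means: alternate assignment and mean-update steps."""
    rng = random.Random(seed)
    centers = [list(p) for p in rng.sample(points, k)]  # random data points as seeds
    for _ in range(iters):
        labels = assign(points, centers)
        new_centers = []
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                # Coordinate-wise mean of the points currently in cluster j.
                new_centers.append([sum(col) / len(members) for col in zip(*members)])
            else:
                new_centers.append(centers[j])  # keep an empty cluster's center in place
        if new_centers == centers:  # centers unchanged, so assignments cannot change
            break
        centers = new_centers
    return assign(points, centers), centers


points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
labels, centers = kmeans(points, k=2)
print(labels, centers)
```

On this toy data the three points near the origin end up in one cluster and the three points near (10, 10) in the other.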

Clusters in high-dimensional data are often small relative to the full data space and may exist only in a subset of the dimensions, so conventional distance measures may be ineffective. To find such hidden clusters, we need advanced techniques that model correlations between objects in subspaces. More generally, clustering divides data into groups (clusters) to make it simpler to understand or process. For example, clustering has been widely used to identify genes and proteins with related functions, to group related material for browsing, and as a method of data compression. Although clustering has a long history and numerous techniques have been developed in statistics, pattern recognition, data mining, and other fields, many challenges remain.
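The claim that full-space distances can hide a cluster that lives in a subspace can be checked with synthetic data. The sketch below plants a tight cluster in 2 of 50 dimensions and compares the average within-cluster distance with the average distance to background points, first in the full space and then in the planted subspace. The sizes, noise levels, and the subspace itself are arbitrary assumptions chosen for illustration.

```python
import math
import random
from statistics import mean

rng = random.Random(1)
D = 50        # total number of dimensions (illustrative)
SUB = [0, 1]  # hypothetical subspace in which the cluster exists

# 30 cluster points: tightly packed in dimensions 0 and 1, uniform noise elsewhere.
cluster = []
for _ in range(30):
    p = [rng.random() for _ in range(D)]
    for dim in SUB:
        p[dim] = 0.5 + rng.gauss(0, 0.01)
    cluster.append(p)

# 30 background points: uniform in every dimension.
background = [[rng.random() for _ in range(D)] for _ in range(30)]


def avg_dist(group_a, group_b, dims):
    """Mean Euclidean distance between points of two groups, using only `dims`."""
    return mean(
        math.dist([a[d] for d in dims], [b[d] for d in dims])
        for a in group_a for b in group_b if a is not b
    )


ratios = {}
for name, dims in [("full space", range(D)), ("subspace", SUB)]:
    within = avg_dist(cluster, cluster, dims)
    across = avg_dist(cluster, background, dims)
    ratios[name] = within / across
    print(f"{name}: within={within:.3f}  to background={across:.3f}  ratio={ratios[name]:.2f}")
```

In the full space the ratio stays close to 1, because the 48 noise dimensions dominate every distance; restricted to the planted subspace, the cluster stands out clearly.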

Approaches to clustering in axis-parallel or arbitrarily oriented affine subspaces differ in how they interpret the overall goal, which is to find clusters in high-dimensional data. A different approach is to find clusters based on patterns in the data matrix, a technique commonly used in bioinformatics and known as "biclustering."
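One widely used way to score a "pattern in the data matrix" is the mean squared residue from Cheng and Church's biclustering algorithm. For a submatrix with rows I and columns J, it averages (a_ij - a_iJ - a_Ij + a_IJ)^2 over all entries, where a_iJ is the row mean, a_Ij the column mean, and a_IJ the overall mean of the submatrix. A submatrix whose entries follow a purely additive pattern (row offset plus column offset) scores 0. The function name and the example matrix below are illustrative.

```python
def mean_squared_residue(matrix, rows, cols):
    """Cheng-Church mean squared residue of the submatrix matrix[rows][cols]."""
    sub = [[matrix[i][j] for j in cols] for i in rows]
    n_r, n_c = len(sub), len(sub[0])
    row_means = [sum(r) / n_c for r in sub]
    col_means = [sum(sub[i][j] for i in range(n_r)) / n_r for j in range(n_c)]
    overall = sum(row_means) / n_r
    # Residue: what is left after removing row, column, and overall effects.
    return sum((sub[i][j] - row_means[i] - col_means[j] + overall) ** 2
               for i in range(n_r) for j in range(n_c)) / (n_r * n_c)


data = [
    [1, 2, 3, 9],
    [4, 5, 6, 0],
    [7, 8, 9, 5],
    [3, 1, 4, 1],
]
print(mean_squared_residue(data, [0, 1, 2], [0, 1, 2]))  # additive block
print(mean_squared_residue(data, range(4), range(4)))    # whole matrix
```

The top-left 3 x 3 block is additive, so its residue is 0.0, while the full matrix has a positive residue. A biclustering algorithm searches for large submatrices whose residue stays below a chosen threshold.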

The approaches can be grouped into five families:

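1. Subspace Search Methods: A subspace search method looks for clusters in subspaces of the full attribute space. Here, a cluster is a group of similar objects within a subspace, where similarity is measured with distance- or density-based criteria. CLIQUE is an example of a subspace clustering algorithm. Subspace search methods follow one of two strategies: bottom-up approaches start from low-dimensional subspaces and search higher-dimensional subspaces only when those may still contain clusters (CLIQUE works this way), while top-down approaches start from the full space and recursively search smaller and smaller subspaces.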

2. Correlation-Based Method: This type of clustering builds advanced correlation models to find hidden clusters. Correlation-based models are preferable when the objects cannot be clustered with subspace search algorithms. Correlation-based clustering includes advanced mining approaches for correlation cluster analysis, and biclustering strategies apply correlation-based clustering to both the attributes and the objects.

3. Biclustering Method: Biclustering groups data along two dimensions at once: the objects and their attributes. In some applications, we can cluster objects and attributes simultaneously; the resulting clusters are called biclusters.

4. Hybrid Method: Not all algorithms try either to find a unique cluster assignment for each point or to find all clusters in all subspaces. Many settle for a result in between, producing a number of possibly overlapping clusters that is not necessarily exhaustive. For example, FIRES is fundamentally a subspace clustering algorithm, but it uses a heuristic too aggressive to credibly produce all subspace clusters. Another hybrid strategy is to include a human in the algorithmic loop: human domain expertise, combined with heuristic sample selection, can help reduce an exponential search space. This is helpful in medicine, where, for instance, clinicians are presented with high-dimensional descriptions of patient conditions and measurements of how effective particular therapies are.

5. Projected Clustering Method: Projected clustering tries to assign each point to exactly one cluster, but different clusters may exist in different subspaces. The general approach combines a special distance function with a standard clustering algorithm. The PreDeCon algorithm, for example, checks for each point which attributes seem to support a clustering and adjusts the distance function so that dimensions with low variance are amplified. PROCLUS uses a similar strategy with k-medoid clustering: initial medoids are guessed, and for each medoid the subspace spanned by attributes with low variance is determined. Each point is assigned to the closest medoid, with the distance computed only within that medoid's subspace. The algorithm then proceeds like the standard PAM algorithm.
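CLIQUE, mentioned in item 1, searches subspaces bottom-up: it cuts every dimension into equal-width intervals, keeps the "dense" one-dimensional units, and then builds candidate higher-dimensional units only from dense lower-dimensional ones (an Apriori-style join and prune). The sketch below implements just that dense-unit search and omits CLIQUE's later steps (joining adjacent dense units into clusters and generating minimal cluster descriptions). The grid size `xi`, the density threshold `tau`, the assumption that coordinates lie in [0, 1), and the synthetic data are all illustrative.

```python
import random
from itertools import combinations


def dense_units(points, xi, tau, max_dim):
    """Bottom-up, CLIQUE-style search for dense grid units.

    Each dimension is cut into `xi` equal-width intervals of [0, 1). A unit is a
    tuple of (dimension, interval) pairs sorted by dimension; it is dense when
    the fraction of points inside it exceeds `tau`.
    """
    n, d = len(points), len(points[0])
    cells = [[min(int(x * xi), xi - 1) for x in p] for p in points]

    def is_dense(unit):
        count = sum(all(c[dim] == iv for dim, iv in unit) for c in cells)
        return count / n > tau

    # Level 1: dense intervals in single dimensions.
    level = [((dim, iv),) for dim in range(d) for iv in range(xi)]
    level = [u for u in level if is_dense(u)]
    result = {1: level}
    for k in range(2, max_dim + 1):
        prev = set(level)
        candidates = set()
        for a, b in combinations(sorted(prev), 2):
            # Join: identical first k-2 pairs, last pairs in different dimensions.
            if a[:-1] == b[:-1] and a[-1][0] < b[-1][0]:
                cand = a + (b[-1],)
                # Prune: every (k-1)-dimensional projection must itself be dense.
                if all(cand[:i] + cand[i + 1:] in prev for i in range(k)):
                    candidates.add(cand)
        level = sorted(u for u in candidates if is_dense(u))
        if not level:
            break
        result[k] = level
    return result


# Hypothetical data: 140 uniform points plus 60 points packed into one grid
# cell of dimensions 0 and 1 (uniform in the other three dimensions).
rng = random.Random(0)
points = [[rng.random() for _ in range(5)] for _ in range(140)]
for _ in range(60):
    p = [rng.random() for _ in range(5)]
    p[0], p[1] = rng.uniform(0.42, 0.48), rng.uniform(0.42, 0.48)
    points.append(p)

units = dense_units(points, xi=10, tau=0.2, max_dim=3)
for k, found in units.items():
    print(k, found)
```

With this data the search should keep only interval 4 of dimensions 0 and 1 at level 1 and report their combination as the single dense two-dimensional unit, without ever examining the noise dimensions at higher levels.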

Clustering high-dimensional data suffers from both algorithmic limitations and the curse of dimensionality, that is, the effect of increasing dimensionality on distance or similarity measures. Together they frequently cause a discrepancy between the groups one perceives visually in a tSNE plot and the clusters an algorithm actually returns. Most clustering approaches rely heavily on a measure of distance or similarity and require that objects within a cluster be, in general, closer to each other than to objects in different clusters. One way to check whether a dataset contains clusters is to plot a histogram of the pairwise distances between points. If the data contains clusters, the histogram usually has two peaks: one reflecting the distances between points within clusters and one representing the average distance between points in different clusters. If there is only one peak, or the two peaks are close together, clustering with distance-based approaches will be challenging.
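The histogram check described above can be sketched in a few lines: compute all pairwise distances, bin them, and look for separated peaks. Here the histogram is printed as text bars, and `has_gap` is a crude heuristic introduced for illustration (an empty bin lying between non-empty bins), not a standard statistical test. The two Gaussian blobs are synthetic example data.

```python
import math
import random
from itertools import combinations


def distance_histogram(points, bins=10):
    """Counts of pairwise Euclidean distances in `bins` equal-width bins."""
    dists = [math.dist(a, b) for a, b in combinations(points, 2)]
    lo, hi = min(dists), max(dists)
    width = (hi - lo) / bins or 1.0  # guard against all distances being equal
    counts = [0] * bins
    for dist in dists:
        counts[min(int((dist - lo) / width), bins - 1)] += 1
    return counts, lo, width


def has_gap(counts):
    """Crude bimodality check: an empty bin lies between two non-empty bins."""
    nonempty = [i for i, c in enumerate(counts) if c]
    return any(counts[i] == 0 for i in range(nonempty[0], nonempty[-1] + 1))


# Hypothetical data: two 2-D Gaussian blobs centred at (0, 0) and (10, 10).
rng = random.Random(2)
blobs = [[rng.gauss(c, 0.5) for _ in range(2)] for c in (0, 10) for _ in range(40)]

counts, lo, width = distance_histogram(blobs)
for i, c in enumerate(counts):
    print(f"{lo + i * width:6.2f} | {'#' * (c // 20)}")
print("empty bins between peaks:", has_gap(counts))
```

The within-blob distances fill the first few bins and the between-blob distances fill the last few, with empty bins in between. For real data, a plotting library with a finer binning would normally replace the text bars.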

What Are the Assumptions and Limitations of Clustering Methods?

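Even though the curse of dimensionality is the most significant barrier to cluster analysis of single-cell RNA sequencing (scRNAseq) data, many clustering methods can perform poorly even in low dimensions because of their inherent assumptions and limitations. Clustering algorithms can be loosely classified into four categories: hierarchical clustering, centroid-based clustering (such as k-means), graph-based clustering (such as Spectral, SNN-cliq, and Seurat), and density-based clustering (such as DBSCAN and HDBSCAN).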

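Four problems need to be overcome when clustering high-dimensional data:

1. Multiple dimensions are hard to think in and impossible to visualize, and because the number of possible values grows exponentially with each dimension, complete enumeration of all subspaces becomes intractable as dimensionality increases (the curse of dimensionality).

2. The concept of distance becomes less precise as the number of dimensions grows, since the distances between any two points in a given dataset converge.

3. A cluster is intended to group objects that are related, based on observations of their attribute values. However, given a large number of attributes, some of them will usually not be meaningful for a given cluster.

4. Given a large number of attributes, some of them are likely to be correlated, so clusters may exist in arbitrarily oriented affine subspaces.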


In this article, we learned that clustering high-dimensional data is difficult because of the curse of dimensionality and the limitations of clustering approaches. Unfortunately, most clustering techniques require the number of clusters to be specified a priori, and choosing it is hard because cross-validation does not apply to clustering. By contrast, the HDBSCAN method has essentially one main hyperparameter (the minimum cluster size), which can be tuned by minimizing the number of unassigned points (cells, in scRNAseq data).

FAQs

1. Which Clustering Method Is the Most Robust for High-Dimensional Data?

Graph-based clustering (Spectral, SNN-cliq, and Seurat) is likely the most robust choice for high-dimensional datasets because it works with distances on a graph, for example a nearest-neighbor graph built from the data, rather than directly with distances in the original high-dimensional space.
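A simple relative of the shared-nearest-neighbor (SNN) methods named above is Jarvis-Patrick clustering: two points are linked when each is among the other's k nearest neighbors and they share enough of those neighbors, and clusters are the connected components of the resulting graph. The sketch below is that classic scheme, not SNN-cliq or Seurat; `k`, `min_shared`, and the two toy grids are illustrative assumptions.

```python
import math
from itertools import combinations


def knn_sets(points, k):
    """Index sets of each point's k nearest neighbours (excluding itself)."""
    out = []
    for i, p in enumerate(points):
        others = sorted((j for j in range(len(points)) if j != i),
                        key=lambda j: math.dist(p, points[j]))
        out.append(set(others[:k]))
    return out


def snn_components(points, k=4, min_shared=1):
    """Jarvis-Patrick-style clustering: link mutual k-NN pairs that share at
    least `min_shared` neighbours, then return connected-component labels."""
    nn = knn_sets(points, k)
    parent = list(range(len(points)))

    def find(x):  # union-find root with path halving
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for i, j in combinations(range(len(points)), 2):
        if j in nn[i] and i in nn[j] and len(nn[i] & nn[j]) >= min_shared:
            parent[find(i)] = find(j)
    return [find(i) for i in range(len(points))]


grid = [(x, y) for x in range(3) for y in range(3)]
points = grid + [(x + 100, y + 100) for x, y in grid]
labels = snn_components(points, k=4, min_shared=1)
print(labels)
```

Because the links depend only on neighbor ranks and neighbor overlap, not on the raw distance values, this kind of graph is less sensitive to the distance concentration that affects high-dimensional data. Here the two 3 x 3 grids come out as two components.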

2. Is k-Means++ Suitable for Clustering High-Dimensional Data?

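k-means++ differs from standard k-means only in how it chooses the initial cluster centers: after a random first center, each further center is sampled with probability proportional to its squared distance from the nearest center already chosen. This careful seeding typically reduces the number of iterations needed compared with random initialization, and it often allocates points to good clusters within the first few iterations without harming the quality of the clustering itself.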
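The seeding rule just described (often called D^2 sampling) can be sketched with the standard library alone. The function below only picks the initial centers; the ordinary k-means iterations that would follow are not shown. The function name and the one-dimensional example points are illustrative assumptions.

```python
import math
import random


def kmeans_pp_init(points, k, seed=0):
    """k-means++ (D^2) seeding: pick k initial centers from `points`."""
    rng = random.Random(seed)
    centers = [rng.choice(points)]  # first center: uniformly at random
    while len(centers) < k:
        # Squared distance from each point to its nearest already-chosen center.
        d2 = [min(math.dist(p, c) ** 2 for c in centers) for p in points]
        if sum(d2) == 0:
            raise ValueError("fewer than k distinct points")
        # Next center: sampled with probability proportional to d2.
        centers.append(rng.choices(points, weights=d2, k=1)[0])
    return centers


# Three well-separated groups on a line: around 0, 1000, and 2000.
points = [(x,) for base in (0, 1000, 2000) for x in (base, base + 1, base + 2)]
centers = kmeans_pp_init(points, k=3)
print(centers)
```

Points already close to a chosen center get almost no weight, so with well-separated groups the seeds land in different groups with overwhelming probability, which is why few Lloyd iterations are needed afterwards.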

3. How Can You Know If a Clustering Is Representative?

The objective of an unsupervised algorithm is harder to pin down than that of a supervised algorithm, which has a straightforward task to fulfill (e.g., classification or regression). As a result, judging the model's success is more subjective. However, the fact that the task is harder to define does not prevent the use of a wide range of performance indicators.
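One such indicator is the silhouette coefficient. For each point, a is its mean distance to the other members of its own cluster and b is its mean distance to the members of the nearest other cluster; the point's score is (b - a) / max(a, b), and the overall score is the mean over all points (close to 1 for compact, well-separated clusters, negative for poor assignments). The pure-Python sketch below assumes Euclidean distance and at least two clusters; libraries such as scikit-learn provide an equivalent `silhouette_score`.

```python
import math


def silhouette(points, labels):
    """Mean silhouette coefficient; needs at least two distinct labels."""
    n = len(points)
    scores = []
    for i in range(n):
        same = [math.dist(points[i], points[j])
                for j in range(n) if j != i and labels[j] == labels[i]]
        if not same:
            scores.append(0.0)  # convention for a point alone in its cluster
            continue
        a = sum(same) / len(same)  # mean distance to the rest of its own cluster
        # Mean distance to the nearest other cluster.
        b = min(
            sum(math.dist(points[i], points[j]) for j in range(n) if labels[j] == other)
            / labels.count(other)
            for other in set(labels) if other != labels[i]
        )
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / n


pts = [(0, 0), (0, 1), (10, 0), (10, 1)]
good = silhouette(pts, [0, 0, 1, 1])
bad = silhouette(pts, [0, 1, 0, 1])
print(round(good, 3), round(bad, 3))
```

The natural grouping scores about 0.9, while the grouping that splits each pair of nearby points scores below zero.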

4. What Are Some of the Most Common Distance Measures?

The most common distance measures are the Euclidean distance and the Manhattan distance. The Euclidean distance is the "ordinary" straight-line distance between two points in Euclidean space. The Manhattan distance is named after the distance a taxi travels on Manhattan's grid of streets, which run parallel or perpendicular to each other: it is the sum of the absolute differences between the coordinates.
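Both measures are one-liners. The helper names below are illustrative; for the Euclidean case, Python's standard library also offers `math.dist`.

```python
import math


def euclidean(p, q):
    """Straight-line distance: square root of the summed squared differences."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


def manhattan(p, q):
    """Taxicab distance: sum of absolute coordinate differences."""
    return sum(abs(a - b) for a, b in zip(p, q))


print(euclidean((0, 0), (3, 4)))  # 5.0
print(manhattan((0, 0), (3, 4)))  # 7
```

For the same pair of points, the Manhattan distance is never smaller than the Euclidean distance, since a taxi cannot cut diagonally across blocks.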

5. What Effect Does High Dimensionality Have on Data Points and Clustering?

In high dimensions, data points concentrate near the surface of the n-ball and leave its core nearly empty. As a result, distances between points grow with the number of dimensions while becoming more and more similar to one another, so the mean distance loses its meaning. This especially affects the Euclidean distance, the most commonly used distance for clustering.
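Both effects can be quantified. Because the volume of an n-ball of radius r scales as r^n, the fraction of a unit ball's volume lying within eps of its surface is 1 - (1 - eps)^n, which approaches 1 as n grows. The "relative contrast" (max - min) / min of the distances from a query point to random points shrinks as the dimension grows, which is the distance concentration described above. The sketch assumes uniformly distributed points in the unit hypercube and an arbitrary eps of 0.05.

```python
import math
import random


def relative_contrast(n_points, dim, seed=0):
    """(max - min) / min of distances from one query point to uniform random points."""
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    dists = [math.dist(query, [rng.random() for _ in range(dim)])
             for _ in range(n_points)]
    return (max(dists) - min(dists)) / min(dists)


def shell_fraction(dim, eps=0.05):
    """Fraction of a unit n-ball's volume lying within eps of its surface."""
    return 1 - (1 - eps) ** dim


for dim in (2, 10, 100, 1000):
    print(dim, round(relative_contrast(200, dim), 3), round(shell_fraction(dim), 3))
```

In 2 dimensions the farthest point is many times farther away than the nearest one, while in 1000 dimensions all distances are within a small fraction of each other; at the same time, more than 99% of a 100-dimensional ball's volume lies in its outer 5% shell.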

