Month End Offer : Get 30% OFF + $999 Study Material FREE - SCHEDULE CALL

Month End Offer : Get 30% OFF + $999 Study Material FREE - SCHEDULE CALL

Month End Offer : Get 30% OFF + $999 Study Material FREE - SCHEDULE CALL

Month End Offer : Get 30% OFF + $999 Study Material FREE - SCHEDULE CALL

Want to ace your DBMS interview? Here are some of the most popularly asked questions of DBMS interview. You can take a thorough look at this list and prepare well for your DBMS interview.

This list elucidates questions asked to Freshers and experienced professionals separately. You can take a look as per your requirement. However, we would suggest you read it in entirety because it will help you in understanding a lot about DBMS.

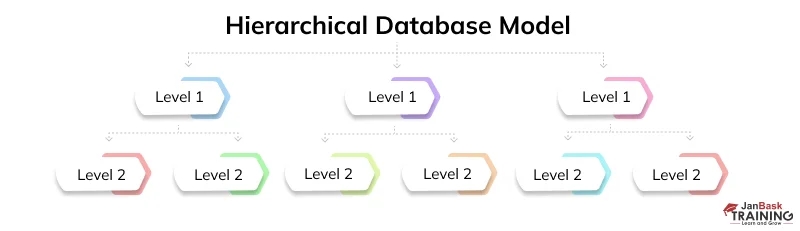

In various leveled database model, information is sorted out into hubs in a tree-like structure. A hub is associated with just one parent hub above it. Thus information in this model has a one-to-numerous relationship. A case of this model is the Document Object Model (DOM) regularly utilized in internet browsers.

A typical Network database model is a refined adaptation of the various leveled model. Here as well, the information is sorted out in a tree-like structure. In any case, one youngster hub can be associated with numerous parent hubs. This offers to ascend to many-to-numerous connections between information hubs. IDMS (Integrated Database Management System), Integrated Data Store (IDS) are instances of Network Databases.

A usual social database is sorted out into tables, records, and segment and there is a well-characterized connection between database tables. A social database, the executive's framework (RDBMS) is an application that enables you to make, update, and regulate a social database. Tables convey and share the data which empowers information search, information association, and detailing. An RDBMS is a subset of a DBMS.

In an object-oriented database model, information is spoken to by items. For instance, sight and sound document or record in a social database are put away as an information object instead of an alphanumeric worth.

SQL (Structured Query Language) is a programming language used to speak with information put away in databases. SQL language is generally simple to compose, read, and decipher.

A database file is an information structure that improves the speed of information recovery activities on a database. The strategy of boosting the accumulation of records is named as Index chasing. It is finished by utilizing techniques like inquiry advancement and query appropriation.

A distributed or disseminated database is an accumulation of numerous interconnected databases that are spread physically crosswise over different areas. The databases can be on a similar system or numerous systems. A DDBMS (Distributed – DBMS) incorporates information coherently so to the client it shows up as one single database.

Database partitioning is where a consistent database is isolated into unmistakable autonomous parts. The database items like tables, files are subdivided and oversaw and got to at the granular level.

Partitioning is incredible usefulness that builds execution with a diminished expense. It upgrades sensibility and improves the accessibility of information.

In a static SQL, SQL articulations are inserted or hard-coded in the application, and they don't change at runtime. How information is to be gotten to is foreordained subsequently it is progressively quick and effective. The SQL proclamations are incorporated at aggregate time.

In a dynamic SQL, SQL explanations are built at runtime; for instance, the application can enable the client to make the inquiries. Essentially, you can manufacture your inquiry at runtime. It is nearly more slow than the static SQL as the inquiry is incorporated at runtime.

Data Warehousing is a system that totals a lot of information from at least one source. Information investigation is performed on the information to settle on vital business choices for associations.

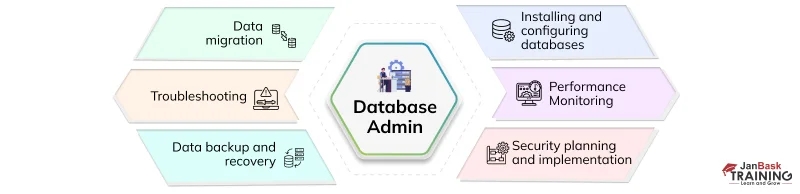

The Database Administrator (DBA) assumes some significant jobs in an association. They are as per the following:

An element relationship model or a substance relationship graph is a visual portrayal of information which is spoken to like elements, properties, and connections are set between elements.

An element can be a certifiable article, which can be effectively recognizable. For instance, in a library database, books, distributors, and individuals can be considered as elements. Every one of these substances has a few qualities or properties that give them their character. In an ER model, the elements are identified with one another.

Data mining is a procedure for dealing with a lot of information to recognize examples and patterns. It utilizes complex scientific and measurable calculations to portion information for the forecast of likely results. There are numerous apparatuses for Data Mining like RapidMiner, Orange, Weka, etc.

Query enhancement is a significant element with regards to the presentation of a database. Distinguishing an effective execution plan for assessing and executing a question that has the least evaluated expense and time is alluded to as inquiry improvement.

A usual catalog is a table that contains the data, for example, the structure of each document, the sort, and capacity organization of every datum thing and different imperatives on the information. The data put away on the list is called Metadata.

Subqueries, or settled inquiries, are accustomed to bringing back a lot of lines to be utilized by the parent inquiry. Contingent upon how the subquery is composed, it very well may be executed once for the parent question, or it tends to be executed once for each column returned by the parent inquiry. On the off chance that the subquery is executed for each line of the parent, this is known as an associated subquery.

An associated subquery can be effectively recognized on the off chance that it contains any references to the parent subquery segments in its WHERE statement. Segments from the subquery can't be referenced anyplace else in the parent question. The accompanying model exhibits a non-connected subquery.

Expansion, erasure, and change are the most significant crude activities normal to all DBMS.

In SQL (Structured Query Language), the term cardinality alludes to the uniqueness of information esteems contained in a specific section (trait) of a database table. The lower the cardinality, the more are the copied qualities in a segment.

SQL Server is an RDBMS created by Microsoft. It is entirely steady and strong consequently well known. The most recent adaptation of SQL Server is SQL Server 2017.

Indexes can be made to uphold uniqueness, to encourage arranging, and empower quick recovery by section esteems. At the point when a segment is much of the time utilized. It is a decent possibility for a file to be utilized with reasonable conditions in the WHERE statements.

Hashing is the change of a series of characters into a normally shorter fixed-length worth or key that speaks to the first string. Hashing is utilized to record and recover things in a database since it is quicker to discover the thing utilizing the shorter hashed key than to discover it utilizing the first esteem.

DBMS is a structure that oversees and handles huge volumes of information put away in the database. It fills in as a halfway among clients and the database. Following are not many focal points of database the executive’s framework -

Following is a rundown of the contrasts among NoSQL and RDBMS: –

Index hunting is seen as a significant piece of database the executive's framework. It improves the speed and the question execution of the database. It is done in the following ways-

It is basic that exchanges are kept as short as would be prudent. It ought to be short so as to diminish dispute for assets. Coming up next are not many rules for coding exchanges proficiently –

Atomicity: In the database the board, atomicity is an idea that guarantees the clients of the inadequate exchanges. It deals with these exchanges and the activities identified with deficient exchanges are left fixed in DBMS.

Aggregation: It totals the gathered elements and their connections. In this, data is assembled and communicated in synopsis structure

Document preparing framework is conflicting and shaky. There are likewise odds of information excess and duplication. Some of the time, it gets hard to get information and simultaneous access is additionally not upheld. There is an extent of information detachment and honesty.

Data independence reflects data transparency and specifies that the application is independent of the storage structure and access strategy of data. It modifies the schema definition in one level without changing the schema level in the next level. Physical data independence and logical data independence are two types of data independence.

Data independence reflects information straightforwardness and determines that the application is autonomous of capacity structure and access methodology of information. It adjusts the composition definition at one level without changing the mapping level at the following level. Physical information autonomy and coherent Data independence are two sorts of information autonomy.

Well, this is all we have in store for today. All these questions are very frequently asked in all the DBMS interviews that happen in major companies. Go through them very thoroughly and try to understand all the answers. Do not just mug them up. If you are stuck somewhere, do let us know we will help you.

SQL Server MERGE Statement: Question and Answer

Top SSRS Interview Questions And Answers

Most Frequently Asked RDBMS Interview Questions And Answers

Cyber Security

QA

Salesforce

Business Analyst

MS SQL Server

Data Science

DevOps

Hadoop

Python

Artificial Intelligence

Machine Learning

Tableau

Download Syllabus

Get Complete Course Syllabus

Enroll For Demo Class

It will take less than a minute

Tutorials

Interviews

You must be logged in to post a comment