25 Jul

Naive Bayes is one of the simplest methods for designing a classifier. It is a probabilistic machine learning algorithm for building classification models that have Bayes’ theorem at their core. It is widely used, especially in natural language processing, document classification, and related areas.

In this blog, we’ll learn:

The term naïve in the name refers to one of the basic assumptions of these classifiers: that the input features are completely independent of each other. In other words, explicitly changing one or more input features is assumed to cause no implicit change in the remaining features.

Naïve Bayes is an extremely popular algorithm because its simple probabilistic formulation gives it significant advantages over other algorithms, such as fast predictions and code that is easy to write. This also makes the model highly scalable.

A naïve Bayes classifier uses conditional probability together with one of a number of probability distributions to train the model. It is therefore important to understand the following points:

In probability theory, conditional probability is the measure of the probability of an event A occurring given that another event, say B, has already taken place. It is written as $P(A \mid B)$ and is read as “the conditional probability of A given B”.

One of the classical examples of conditional probability is flipping a coin. When a fair coin is flipped, heads and tails are equally likely; in other words, the probability of either event is 0.5. Conditional probability then asks about the chance of getting a head given that we have already had a tail. Because successive flips are independent, this theoretically remains at 0.5 as well. Bayes’ theorem provides a mathematical model for calculating such probabilities.
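The coin example can be checked by enumerating the sample space. The following is a minimal sketch, assuming two flips of a fair coin; the helper names `probability` and `conditional` are illustrative and not part of any library.

```python
from fractions import Fraction
from itertools import product

# Sample space for two flips of a fair coin; all four outcomes are equally likely.
outcomes = list(product("HT", repeat=2))

def probability(event):
    """Probability of an event given as a predicate over outcomes."""
    favourable = [o for o in outcomes if event(o)]
    return Fraction(len(favourable), len(outcomes))

def conditional(event_a, event_b):
    """P(A | B) = P(A and B) / P(B)."""
    p_b = probability(event_b)
    p_a_and_b = probability(lambda o: event_a(o) and event_b(o))
    return p_a_and_b / p_b

def first_is_tail(outcome):
    return outcome[0] == "T"

def second_is_head(outcome):
    return outcome[1] == "H"

p_head_given_tail = conditional(second_is_head, first_is_tail)
print(p_head_given_tail)  # 1/2
```

The conditional probability equals the unconditional probability of a head, which is what independence of the two flips means.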

Bayes’ theorem is also sometimes called the god’s theorem. It describes the probability of an event occurring, based on existing knowledge about conditions that are related to that event. Say the risk of diabetes is related to a person’s age; then, using Bayes’ theorem, the age of a person can be used to estimate the chance that they have diabetes, and the result will be much better than in a situation where we had no idea about their age.

Mathematically, Bayes’ theorem is given by the following equation:

$$P(A \mid B) = \frac{P(B \mid A)\, P(A)}{P(B)}$$


The Naïve Bayes:

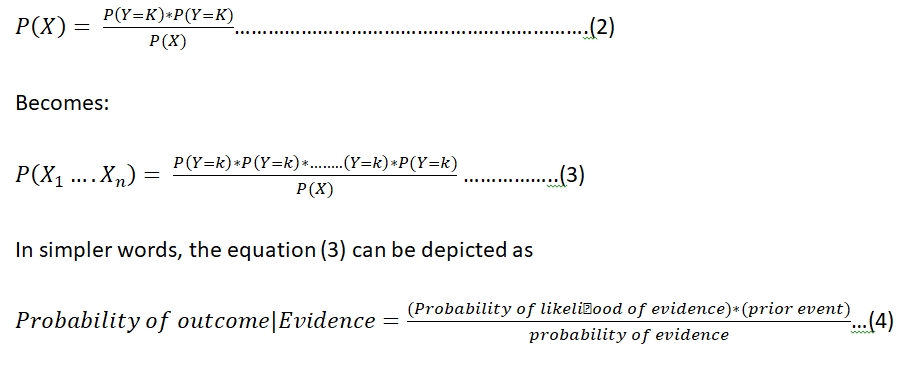

Bayes’ theorem, as depicted in figure (1), shows that a conditional probability can be reversed: $P(A \mid B)$ can be obtained from $P(B \mid A)$, provided the probabilities of the individual events are known. When Bayes’ rule is applied under the assumption that the features are completely independent of one another, the resulting model is called naïve Bayes.
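As a rough numerical illustration of Bayes’ rule, the sketch below reuses the diabetes-and-age example from earlier. All three input probabilities are hypothetical values chosen only for illustration, and `bayes_posterior` is an illustrative helper name.

```python
def bayes_posterior(likelihood, prior, evidence):
    """Return P(A | B) = P(B | A) * P(A) / P(B)."""
    if evidence <= 0:
        raise ValueError("P(B) must be positive")
    return likelihood * prior / evidence

# Hypothetical numbers, not taken from any real dataset:
p_diabetes = 0.10                # P(A): prior probability of diabetes
p_over50_given_diabetes = 0.60   # P(B | A): share of diabetics aged over 50
p_over50 = 0.30                  # P(B): share of the population aged over 50

p_diabetes_given_over50 = bayes_posterior(
    p_over50_given_diabetes, p_diabetes, p_over50
)
print(round(p_diabetes_given_over50, 3))  # 0.2
```

Under these assumed numbers, knowing that a person is over 50 doubles the estimated probability of diabetes from 0.10 to 0.20.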

A and B in the standard formula can be replaced with X and Y, where X is the independent variable (the features) and Y is the dependent variable (the class label):

$$P(Y \mid X) = \frac{P(X \mid Y)\, P(Y)}{P(X)} \tag{3}$$

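Now, when X consists of more than one feature, $X = (X_1, X_2, \ldots, X_n)$, each feature is treated as a separate, independent variable and Bayes’ rule is applied to all of them together for a class label q:

$$P(Y = q \mid X_1, \ldots, X_n) = \frac{P(X_1 \mid Y = q)\, P(X_2 \mid Y = q) \cdots P(X_n \mid Y = q)\; P(Y = q)}{P(X_1, \ldots, X_n)} \tag{4}$$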

Comparing equations (3) and (4) shows that the likelihoods of the individual X’s are multiplied together; this product is called the likelihood of the evidence. Each factor can be estimated from the training dataset by filtering the records where $Y = q$.

The term that multiplies the likelihood of the evidence is called the prior. It is the overall probability of $Y = q$, where q is a class label of Y.
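The prior and the likelihood terms described above can be estimated by simple counting. The following is a minimal sketch on a hypothetical six-record toy dataset with two categorical features; the data and the function names are made up for illustration.

```python
# Hypothetical toy training set: (outlook, windy) -> play
records = [
    (("sunny", "no"), "yes"),
    (("sunny", "yes"), "no"),
    (("rainy", "yes"), "no"),
    (("sunny", "no"), "yes"),
    (("rainy", "no"), "yes"),
    (("rainy", "yes"), "no"),
]

def prior(q):
    """P(Y = q): fraction of all records whose class label is q."""
    return sum(1 for _, y in records if y == q) / len(records)

def likelihood(i, value, q):
    """P(X_i = value | Y = q), estimated by filtering records where Y = q."""
    in_class = [x for x, y in records if y == q]
    return sum(1 for x in in_class if x[i] == value) / len(in_class)

def score(x, q):
    """Unnormalised posterior: product of the likelihoods times the prior."""
    result = prior(q)
    for i, value in enumerate(x):
        result *= likelihood(i, value, q)
    return result

def predict(x):
    """Return the class label with the highest unnormalised posterior."""
    labels = sorted({y for _, y in records})
    return max(labels, key=lambda q: score(x, q))

query = ("sunny", "no")
print(predict(query))  # yes
```

The denominator of the formula is the same for every class, so it can be dropped when only the most probable class is needed.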

As the above equations show, the naïve Bayes formula only yields probabilities. On its own, therefore, it is not yet a classifier; it is always combined with a probability distribution that models the features in order to build one. The most commonly used naïve Bayes classifiers are the following:


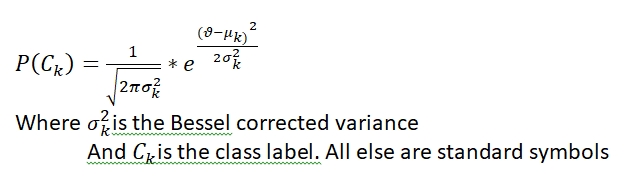

1). Gaussian naïve Bayes:

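Gaussian naïve Bayes models each feature with a Gaussian (normal) probability distribution and is used to deal with continuous data. For a feature value $x$ with mean $\mu$ and standard deviation $\sigma$, the Gaussian distribution is defined as:

$$f(x) = \frac{1}{\sqrt{2\pi\sigma^2}} \exp\left(-\frac{(x - \mu)^2}{2\sigma^2}\right)$$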
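The Gaussian density used for one feature within one class can be sketched in plain Python as follows. The petal lengths are hypothetical values, and `gaussian_pdf` and `fit_gaussian` are illustrative names rather than library functions.

```python
import math

def gaussian_pdf(x, mean, variance):
    """Density of a normal distribution with the given mean and variance at x."""
    coefficient = 1.0 / math.sqrt(2.0 * math.pi * variance)
    exponent = -((x - mean) ** 2) / (2.0 * variance)
    return coefficient * math.exp(exponent)

def fit_gaussian(values):
    """Estimate the mean and (population) variance of one feature in one class."""
    mean = sum(values) / len(values)
    variance = sum((v - mean) ** 2 for v in values) / len(values)
    return mean, variance

# Hypothetical petal lengths (cm) observed for a single class
petal_lengths = [1.4, 1.5, 1.3, 1.6, 1.4]
mu, var = fit_gaussian(petal_lengths)

# Likelihood of observing a petal length of 1.5 cm in this class
print(gaussian_pdf(1.5, mu, var))
```

A Gaussian naïve Bayes classifier would fit one such mean and variance per feature and per class, then multiply the resulting densities with the class prior.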

2). Multinomial Naïve Bayes:

If the features in the dataset are counts or frequencies rather than continuous values, the Gaussian distribution cannot be applied. In this situation, the multinomial distribution is used.
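A multinomial likelihood over count features might be sketched as below. The vocabulary and word counts are hypothetical, equal class priors are assumed, and additive (Laplace) smoothing is added as an assumption so that unseen words do not produce zero probabilities.

```python
import math

# Hypothetical word counts per class, summed over the training documents
vocabulary = ["free", "offer", "meeting", "report"]
class_word_counts = {
    "spam": [20, 15, 1, 0],
    "ham": [2, 1, 18, 12],
}

def word_probabilities(counts, alpha=1.0):
    """P(word | class) with additive smoothing (alpha is an assumed parameter)."""
    total = sum(counts) + alpha * len(counts)
    return [(c + alpha) / total for c in counts]

def log_likelihood(doc_counts, label):
    """log P(document | class), up to a constant that is the same for every class."""
    probs = word_probabilities(class_word_counts[label])
    return sum(n * math.log(p) for n, p in zip(doc_counts, probs))

doc = [3, 1, 0, 0]  # "free" three times, "offer" once
best = max(class_word_counts, key=lambda label: log_likelihood(doc, label))
print(best)  # spam
```

Working with log-probabilities keeps the product of many small numbers from underflowing.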

3). Bernoulli naïve Bayes:

When the features are binary in nature, Bernoulli naïve Bayes is used. It is very popular in document classification, where the feature space is binary (for example, whether or not a word occurs in a document).
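For binary features, the per-class likelihood is a product of Bernoulli terms. The sketch below uses hypothetical word-presence probabilities, and `bernoulli_likelihood` is an illustrative helper name.

```python
# Hypothetical per-class probabilities that each of three words appears in a document
word_presence_prob = {
    "spam": [0.8, 0.7, 0.1],
    "ham": [0.1, 0.2, 0.6],
}

def bernoulli_likelihood(x, probs):
    """P(x | class) for binary features: p if the feature is 1, (1 - p) if it is 0."""
    result = 1.0
    for xi, p in zip(x, probs):
        result *= p if xi == 1 else (1.0 - p)
    return result

doc = [1, 0, 0]  # first word present, the other two absent
scores = {
    label: bernoulli_likelihood(doc, probs)
    for label, probs in word_presence_prob.items()
}
print(scores)
```

Unlike the multinomial model, the absence of a word contributes a factor of its own here, so missing words also carry evidence.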

In this blog, the naïve Bayes classifier is built in Python using scikit-learn (sklearn), on the classical iris flower dataset. Details of the dataset can be found here.

First of all, the libraries need to be imported. The commands for this are:

    from sklearn.datasets import load_iris
    from sklearn.model_selection import train_test_split
    from sklearn.naive_bayes import GaussianNB

Loading the dataset and splitting it into training and testing sets:


    # Load the features and labels, then hold out half of the data for testing
    X, y = load_iris(return_X_y=True)
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.5, random_state=0)

Training the model:

    gnb = GaussianNB()

If one wishes to use any other naïve Bayes classifier, it needs to be specified here instead.
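As a rough sketch of how the estimator can be swapped, the loop below runs the same workflow with `GaussianNB` and with `MultinomialNB`, both of which are provided by `sklearn.naive_bayes`. `MultinomialNB` expects non-negative features, which holds for the iris measurements, although count data is its more typical use.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import GaussianNB, MultinomialNB

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.5, random_state=0
)

# Same workflow, different estimator: only the constructor changes.
for model in (GaussianNB(), MultinomialNB()):
    y_pred = model.fit(X_train, y_train).predict(X_test)
    mislabeled = int((y_test != y_pred).sum())
    print(type(model).__name__, "mislabeled:", mislabeled)
```

Which model suits a dataset depends on whether its features are continuous, counts, or binary.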

Fitting the model, querying the test set, and checking the results:

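    # Fit on the training set, then predict labels for the test set
    y_pred = gnb.fit(X_train, y_train).predict(X_test)
    # Count the test points whose predicted label differs from the true label
    print("Number of mislabeled points out of a total %d points : %d" % (X_test.shape[0], (y_test != y_pred).sum()))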

This query, in this case, gives the following output:

    Number of mislabeled points out of a total 75 points : 4

Thus, 4 out of the 75 test points were classified incorrectly, giving a final accuracy of about 94.7% (71 out of 75).
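The accuracy figure follows directly from the mislabeled count. A minimal sketch of the arithmetic, with `accuracy` as an illustrative helper name:

```python
def accuracy(total_points, mislabeled_points):
    """Fraction of test points that were labeled correctly."""
    if total_points <= 0:
        raise ValueError("total_points must be positive")
    return (total_points - mislabeled_points) / total_points

acc = accuracy(75, 4)
print(f"{acc:.1%}")  # 94.7%
```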

Pros:

Cons:

Uses of Naïve Bayes based classifier:

This blog covered the naïve Bayes classifier, which belongs to the supervised learning domain of machine learning. A naïve Bayes classifier is extremely competent when the condition of independence in the feature space is met. It can be used, according to the level of understanding and the requirements of the project, wherever that condition holds.


The JanBask Training Team includes certified professionals and expert writers dedicated to helping learners navigate their career journeys in QA, Cybersecurity, Salesforce, and more. Each article is carefully researched and reviewed to ensure quality and relevance.
