25

JulInternational Womens Day : Flat 30% off on live classes + 2 free self-paced courses - SCHEDULE CALL

We have SQL Server database to store day to day transactions that happen through our application. There can be many such applications that store data in different databases. We have ETL tools which help us to extract data from these application databases and store them in a separate relational database called Data warehouse.

Reporting tools are there which help us to display the data elegantly stored in the data warehouse. But the purpose of data stored in a data warehouse is not only for creating reports. We should also be able to use these data for analytical purposes as well. The data stored in the data warehouse should be able to predict the future course of the organization if utilized properly. It should also be able to show you the present status of an organization. This is where SQL server analysis services download and use of leading SQL server analysis services 2017’s and other years innovations comes into play.

Microsoft SQL Server Analysis Services, SSAS, is an online analytical processing (OLAP) and information mining tool in Microsoft SQL Server. SSAS is utilized as an apparatus by associations to examine and understand data conceivably spread out over different databases, or in dissimilar tables or documents.

Over the next few paragraphs, we would try to give the user a glimpse of what all is available as SQL Server Analysis Service. We will discuss the available components and also show you practically with examples of how they can be implemented. And also teach a few steps on how to connect SQL server analysis services power BI.

Data Source defines the source of data that will be used for analysis in SSAS. SQL Server analysis services support data from multiple data sources. Below is a list of different databases that are supported by SSAS.

|

Source |

Version |

File Types |

|

MS Access |

2010 and later |

.accdb or .mdb |

|

SQL Server |

2008 and later |

NA |

|

Oracle |

9i and later |

NA |

|

Text file |

NA |

.txt,.tab,.csv |

|

Excel |

2010 and above |

.xlsx,.xlsm,.xlsb,.xltx,,.xltm |

In all our examples in this write-up, we will be using SQL Server database as our principal data source.

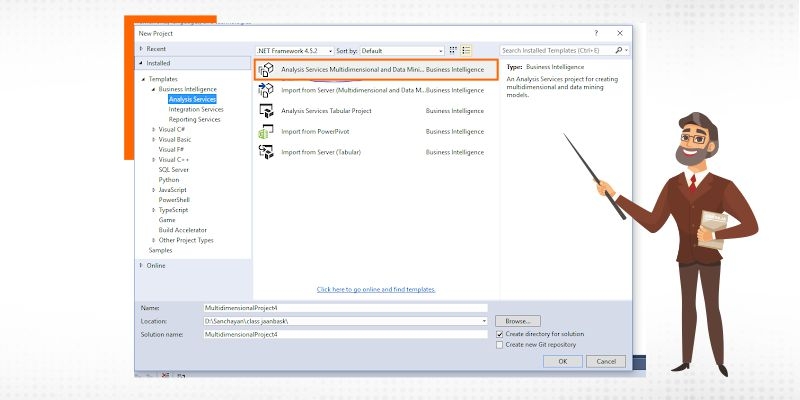

Step 1

Open an SSAS Project. The following screen will come up.

Step 2

Right-click on the data source tab and select new data source.

The Data source wizard window appears.

Click next.

Step 3

In the next screen, click on new to select a data source and a database name.

The final screen looks like below.

Click next.

Step 4

On the next screen you need to put your database credentials. If you are using a standalone machine you can select “use the service account” option. Otherwise, you need to put user id and password.

Click next.

Step 5

Click finish. You will find the data source is added to the project.

A Data Source View in SSAS is a set of tables or perspectives from the database that is required to design the cube. Analysis services can just access the tables or perspectives inside the Data Source View; anything outside the Data Source View isn't available. Some fundamental properties of SSAS Data source view are:

For instance, if the data warehouse contains 100 tables, and we need just 20 tables to structure the cube, at that point, there is no reason for including 100 tables. Rather than that, the data source view gives space to include those 20 tables.

SQL Server Training & Certification

Let us now add the data source view to the same project we have created earlier.

Step 1

Right-click on the Data Source View tab and select New Data Source View.

The Data Source View Wizard window appears.

Click on next

Step 2

The Select a Data Source window appears. You can select a new data source or you can continue with the same data source you added under the Data Sources tab.

Click on the Next button.

Step 3

The Select Tables and Views window appears. You can either select specific tables or you can select all the tables. Select the tables and click next.

Click on the Next button.

Step 4

The Completing the Wizard screen appears.

Click on the Finish button.

Step 5

The selected tables are added under Data Source Views tab.

It might happen that during the creation of the data source view, you might have added some unnecessary tables by mistake. It might also happen that you have overlooked adding some tables which were necessary for analysis purposes. If you have encountered such issues, there is always an option to add or remove the tables from the Data Source view. In the next few paragraphs, we will learn how to add or remove a table from the data source view.

SQL Server Training & Certification

Step 1

Double click on the Data Source View .dsv file under the data source view tab in the SSAS project.

This will open up the added tables and relations in the below-shown format.

Step 2

Right click anywhere on the blank space and select Add/Remove table option.

Step 3

The Add/Remove Tables window comes up. You can add or remove tables according to your requirements and click on Ok.

The tables will be added in or deleted from the main window.

|

Star Schema |

SnowFlake Schema |

|

The star schema consists of one or more fact tables referencing any number of dimension table |

The snowflake schema is represented by centralized fact tables which are connected to multiple dimensions |

|

In star schema, The fact tables and the dimension tables are contained. |

While in the snowflake schema, The fact tables, dimension tables as well as sub dimension tables are contained. |

|

Star schema is a top-down model. |

While it is a bottom-up model. |

|

It’s understanding is very simple. |

It’s understanding is difficult. |

Star Schema Example

Let us use the following database and table for the star schema example. Below the query to create the database and the corresponding tables are given. You can add data according to your choice.

Database Creation Query

CREATE DATABASE [Star Schema Database]

Fact Table Creation Query

CREATE TABLE [dbo].[Revenue](

[Dealer_id] [varchar](50) NOT NULL,

[Model_id] [varchar](50) NULL,

[Branch_id] [varchar](50) NULL,

[Date_ID] [varchar](50) NULL,

[Units_Sold] [varchar](50) NULL,

[Revenue] [varchar](50) NULL,

CONSTRAINT [PK_Revenue] PRIMARY KEY CLUSTERED

(

[Dealer_id] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

ALTER TABLE [dbo].[Revenue] WITH CHECK ADD CONSTRAINT [FK_Revenue_Branch_Dim] FOREIGN KEY([Branch_id])

REFERENCES [dbo].[Branch_Dim] ([Branch_ID])

GO

ALTER TABLE [dbo].[Revenue] CHECK CONSTRAINT [FK_Revenue_Branch_Dim]

GO

ALTER TABLE [dbo].[Revenue] WITH CHECK ADD CONSTRAINT [FK_Revenue_Date_Dim] FOREIGN KEY([Date_ID])

REFERENCES [dbo].[Date_Dim] ([Date_ID])

GO

ALTER TABLE [dbo].[Revenue] CHECK CONSTRAINT [FK_Revenue_Date_Dim]

GO

ALTER TABLE [dbo].[Revenue] WITH CHECK ADD CONSTRAINT [FK_Revenue_Dealer] FOREIGN KEY([Dealer_id])

REFERENCES [dbo].[Dealer] ([Dealer_id])

GO

ALTER TABLE [dbo].[Revenue] CHECK CONSTRAINT [FK_Revenue_Dealer]

GO

ALTER TABLE [dbo].[Revenue] WITH CHECK ADD CONSTRAINT [FK_Revenue_Product] FOREIGN KEY([Model_id])

REFERENCES [dbo].[Product] ([Model_id])

GO

ALTER TABLE [dbo].[Revenue] CHECK CONSTRAINT [FK_Revenue_Product]

GO

Dimension Table Creation Queries

Product

CREATE TABLE [dbo].[Product](

[Product_ID] [varchar](50) NOT NULL,

[Product_Name] [varchar](50) NULL,

[Model_id] [varchar](50) NOT NULL,

[Variant_id] [varchar](50) NULL,

CONSTRAINT [PK_Product_1] PRIMARY KEY CLUSTERED

(

[Model_id] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

Dealer

CREATE TABLE [dbo].[Dealer](

[Dealer_id] [varchar](50) NOT NULL,

[Location_id] [varchar](50) NULL,

[Country_id] [varchar](50) NULL,

[Dealer_NM] [varchar](50) NULL,

[Dealer_CNTCT] [varchar](50) NULL,

CONSTRAINT [PK_Dealer] PRIMARY KEY CLUSTERED

(

[Dealer_id] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

Date_Dim

CREATE TABLE [dbo].[Date_Dim](

[Date_ID] [varchar](50) NOT NULL,

[Year] [varchar](50) NULL,

[Month] [varchar](50) NULL,

[Quarter] [varchar](50) NULL,

[Date] [varchar](50) NULL,

CONSTRAINT [PK_Date_Dim] PRIMARY KEY CLUSTERED

(

[Date_ID] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

Branch_Dim

CREATE TABLE [dbo].[Branch_Dim](

[Branch_ID] [varchar](50) NOT NULL,

[Name] [varchar](50) NULL,

[Address] [varchar](50) NULL,

[Country] [varchar](50) NULL,

CONSTRAINT [PK_Branch_Dim] PRIMARY KEY CLUSTERED

(

[Branch_ID] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

SQL Server Training & Certification

Once the schema is created the final output looks like below.

As you can see the final output looks like a star with the revenue fact table in the middle surrounded by the dimension tables.

Snowflake Schema Example

Let us use the following database and table for the snowflake schema example. Below the query to create the database and the corresponding tables are given. You can add data according to your choice.

Database Creation Query

CREATE DATABASE [Snowflake Schema Database]

Fact Table Creation Query

CREATE TABLE [dbo].[Revenue](

[Dealer_id] [varchar](50) NULL,

[Model_id] [varchar](50) NULL,

[Branch_id] [varchar](50) NULL,

[Date_id] [varchar](50) NULL,

[Units_sold] [varchar](50) NULL,

[Revenue] [varchar](50) NULL

) ON [PRIMARY]

GO

ALTER TABLE [dbo].[Revenue] WITH CHECK ADD CONSTRAINT [FK_Revenue_Branch_dim] FOREIGN KEY([Branch_id])

REFERENCES [dbo].[Branch_dim] ([Branch_id])

GO

ALTER TABLE [dbo].[Revenue] CHECK CONSTRAINT [FK_Revenue_Branch_dim]

GO

ALTER TABLE [dbo].[Revenue] WITH CHECK ADD CONSTRAINT [FK_Revenue_Date_Dim] FOREIGN KEY([Date_id])

REFERENCES [dbo].[Date_Dim] ([Date_id])

GO

ALTER TABLE [dbo].[Revenue] CHECK CONSTRAINT [FK_Revenue_Date_Dim]

GO

ALTER TABLE [dbo].[Revenue] WITH CHECK ADD CONSTRAINT [FK_Revenue_Dealer] FOREIGN KEY([Dealer_id])

REFERENCES [dbo].[Dealer] ([Dealer_id])

GO

ALTER TABLE [dbo].[Revenue] CHECK CONSTRAINT [FK_Revenue_Dealer]

GO

ALTER TABLE [dbo].[Revenue] WITH CHECK ADD CONSTRAINT [FK_Revenue_Product] FOREIGN KEY([Model_id])

REFERENCES [dbo].[Product] ([Model_id])

GO

ALTER TABLE [dbo].[Revenue] CHECK CONSTRAINT [FK_Revenue_Product]

GO

Dimension Table Creation Queries

Product

CREATE TABLE [dbo].[Product](

[Product_ID] [varchar](50) NOT NULL,

[Product_Name] [varchar](50) NULL,

[Model_id] [varchar](50) NOT NULL,

[Variant_id] [varchar](50) NULL,

CONSTRAINT [PK_Product_1] PRIMARY KEY CLUSTERED

(

[Model_id] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

Branch

CREATE TABLE [dbo].[Branch_dim](

[Branch_id] [varchar](50) NOT NULL,

[Name] [varchar](50) NULL,

[Address] [varchar](50) NULL,

[Country] [varchar](50) NULL,

CONSTRAINT [PK_Branch_dim] PRIMARY KEY CLUSTERED

(

[Branch_id] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

Date_Dim

CREATE TABLE [dbo].[Date_Dim](

[Date_id] [varchar](50) NOT NULL,

[Year] [varchar](50) NULL,

[Month] [varchar](50) NULL,

[Quarter] [varchar](50) NULL,

[Date] [varchar](50) NULL,

CONSTRAINT [PK_Date_Dim] PRIMARY KEY CLUSTERED

(

[Date_id] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

Dealer

CREATE TABLE [dbo].[Dealer](

[Dealer_id] [varchar](50) NOT NULL,

[Location_id] [varchar](50) NULL,

[Country_id] [varchar](50) NULL,

[Dealer_NM] [varchar](50) NULL,

[Dealer_CNCT] [varchar](50) NULL,

CONSTRAINT [PK_Dealer] PRIMARY KEY CLUSTERED

(

[Dealer_id] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

ALTER TABLE [dbo].[Dealer] WITH CHECK ADD CONSTRAINT [FK_Dealer_Country] FOREIGN KEY([Country_id])

REFERENCES [dbo].[Country] ([Country_id])

GO

ALTER TABLE [dbo].[Dealer] CHECK CONSTRAINT [FK_Dealer_Country]

GO

ALTER TABLE [dbo].[Dealer] WITH CHECK ADD CONSTRAINT [FK_Dealer_Dealer] FOREIGN KEY([Dealer_id])

REFERENCES [dbo].[Dealer] ([Dealer_id])

GO

ALTER TABLE [dbo].[Dealer] CHECK CONSTRAINT [FK_Dealer_Dealer]

GO

ALTER TABLE [dbo].[Dealer] WITH CHECK ADD CONSTRAINT [FK_Dealer_Location] FOREIGN KEY([Location_id])

REFERENCES [dbo].[Location] ([Location_id])

GO

ALTER TABLE [dbo].[Dealer] CHECK CONSTRAINT [FK_Dealer_Location]

GO

Location

CREATE TABLE [dbo].[Location](

[Location_id] [varchar](50) NOT NULL,

[Region] [varchar](50) NULL,

CONSTRAINT [PK_Location] PRIMARY KEY CLUSTERED

(

[Location_id] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

Country

CREATE TABLE [dbo].[Country](

[Country_id] [varchar](50) NOT NULL,

[Country_name] [varchar](50) NULL,

CONSTRAINT [PK_Country] PRIMARY KEY CLUSTERED

(

[Country_id] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

The final output looks like below. You can see that one of the dimension tables has been further normalized into two more tables, thus making the schema look like a snowflake.

Step 1

Right click on the dimension tab of any SSAS project and select New Dimensions.

Step 2

The dimension wizard window pops up. Click next.

Select creation method window appears. You can select any of the options provided. For this example we will be selecting and using the existing table.

Step 3

Next windows ask you to provide source information. Click next.

Step 4

Select the dimension attributes in the next window.

Click on next.

Step 5

Click finish on the next window.

The new dimension will be created like below.

Step 1

Right click on the cube tab of any SSAS project and select New Cube.

Step 2

The cube wizard window appears. Click on next.

Step 3

Select creation Method window appears. We will select the Use Existing Table option.

Click on next.

Step 4

In the Select Measure Group Tables window select the tables you want to put into the cube and click next.

Step 5

In the Select Measures window, click on next.

Step 6

Next screen asks you to select an existing dimension. Click on next.

Step 7

Next screen asks you to select new Dimensions. You can select all of them or can choose from the list.

Step 8

In the next window, click on finish.

Step 9

The final output looks like below and the cube is created.

With a power BI desktop, it is easy to access SSAS multidimensional models aka SSAS MD. These are the quick tips to SQL server analysis services power BI integration.

Learn SQL Server in the Easiest Way

Open power BI desktop, click on the home tab, select on “get data” > “more” > “database”.

There, lookout and select SQL server analysis services database and click on “connect”.

Further an SQL server analysis services database window will appear, there enter the server name, connection mode and click on “Ok”, on doing that, a successful connection of SQL server analysis services power BI will be established.

The above write up introduces you to all the aspects of SQL server analysis services (SSAS). But this is only the tip of an iceberg. To learn all these discussed topics and others such as SQL server analysis services download/installation procedures, SQL server analysis services power BI combining, SQL server analysis services 2017 innovations and more in-depth, enroll for our comprehensive SSAS training program to lead the landscape of analysis services requirement for leading data-driven organizations.

Pinterest

Pinterest

Email

Email

I love to learn new things and also like sharing my knowledge with others. As an experienced IT Professional I like to update myself constantly with new and upcoming technologies. The database management system is one of my favorite subjects which I constantly explore.

Cyber Security

QA

Salesforce

Business Analyst

MS SQL Server

Data Science

DevOps

Hadoop

Python

Artificial Intelligence

Machine Learning

Tableau

Search Posts

Related Posts

Receive Latest Materials and Offers on SQL Server Course

Interviews