Introduction

Big Data is no longer an elusive concept. It has become an essential component of today's business landscape, assisting organizations in gaining useful insights and making data-driven decisions. However, managing and analyzing such large amounts of data can be difficult. This is where Amazon Web Services (AWS) enters the picture. The AWS Big Data portfolio includes a set of powerful and scalable solutions for dealing with the difficulties of Big Data.

Big Data has transformed the way we analyse, understand, and use information. AWS Big Data solutions are a game changer when it comes to handling and analysing these massive volumes of data.

AWS is the world's most comprehensive and widely used cloud platform, providing over 200 fully featured services from data centres across the world. Whether you're new to big data or an experienced pro, AWS has tools to help you design and deploy big data applications rapidly and affordably.

So get ready to uncover hidden trends, identify new opportunities, and make better data-driven decisions with AWS big data training courses. Let's get started!

An Overview of AWS

Amazon Web Services (AWS) is an advanced cloud services platform that provides a variety of services such as computing power, storage, and databases to assist organisations in scaling and growing. Its versatility, security, and cost-effectiveness make it the preferred solution for enterprises of all sizes. It offers a set of cloud-based services that combine to provide an on-demand computing platform.

AWS offers an extensive range of services for computing, storage, networking, databases, analytics, machine learning, and the Internet of Things (IoT). Companies that use AWS have access to a highly reliable, scalable, low-cost cloud infrastructure platform that supports hundreds of thousands of enterprises in 190 countries worldwide.

With so many integrated cloud services, AWS enables you to choose the tools and features that will be most beneficial to your business. You pay only for the services you use, which lowers your operational costs and allows you to run your infrastructure more efficiently.

What Do You Mean By Big Data?

Big Data refers to extraordinarily massive data collections that can be computationally processed to discover patterns, trends, and relationships, particularly with regard to human behaviour and interactions. We're talking about massive amounts of data that are simply too large to process or understand manually.

When dealing with vast amounts of data, you need equally capable tools and technology to store, organise, and analyse it all. This is where AWS comes into play. Its big data services provide the infrastructure and tools to help you uncover the insights hidden within massive datasets.

Big Data has the following major characteristics:

- Volume: Petabytes and exabytes of data from a variety of sources.

- Velocity: Data is generated and collected at an astounding rate.

- Variety: Data can be structured, unstructured, text, audio, video, log files, and other formats.

Understanding Big Data and how to use it is critical for organisations today. The insights gained from analysing massive amounts of data about your customers, products, services, and processes can give you a major competitive advantage. AWS offers a comprehensive collection of analytics tools and services to assist you in extracting commercial value from your data at any scale.

What is AWS Big Data?

AWS Big Data is the collection of Amazon Web Services (AWS) capabilities for processing and storing large datasets. These services are intended to assist organizations in efficiently processing, analyzing, and storing enormous amounts of data.

These services are highly scalable, cost-effective, and capable of performing complicated data processing tasks, making them a popular choice for enterprises seeking insights and competitive benefits from big data.

These services fall into four groups: data storage, data processing, data analysis, and machine learning. They include Amazon EMR, Amazon Redshift, Amazon Kinesis, and Amazon QuickSight. Some of the main services are:

- Amazon S3: Scalable object storage for analytics and data lakes.

- Amazon EMR: A managed Hadoop framework that enables the processing of massive amounts of data.

- Amazon Redshift: A fast, fully managed data warehouse that makes large data analysis simple.

- Amazon Kinesis: Data streaming and analytics in real time.

- Amazon Athena: A serverless query service for analysing S3 data.

- Amazon QuickSight: A rapid business analytics solution for creating visualisations, performing ad hoc analysis, and quickly gaining insights from data.

The services are fully managed, scalable, and cost-effective. Big data analytics is now accessible to organisations of all sizes thanks to AWS Big Data.

Big Data Architecture on AWS

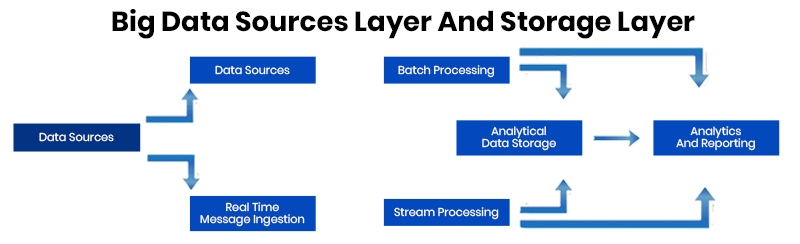

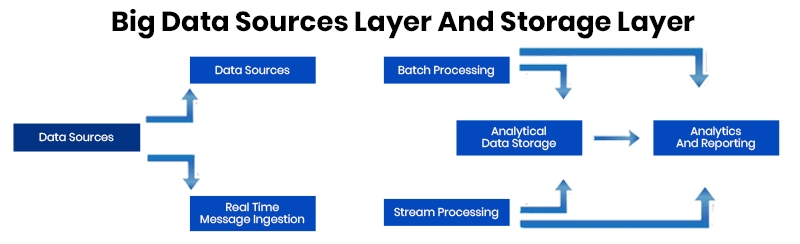

AWS Big Data provides a scalable and adaptable infrastructure for big data management. The architecture is comprised of a collection of services that collaborate to gather, store, process, and analyse data. The AWS Big Data architecture is divided into three layers:

Storage layer: The storage layer holds the data. AWS services for this layer include Amazon S3 and Amazon Redshift.

Processing layer: The processing layer cleans, transforms, and prepares the stored data. AWS services for this layer include Amazon EMR and AWS Glue.

Analysis layer: The analysis layer queries and visualises the processed data. AWS services for this layer include Amazon Athena and Amazon QuickSight.

What Is The Work Process Of AWS Big Data Solutions?

To make the most of your company's Big Data on AWS, follow this simple workflow:

- Collect and organise your data: Collect and store raw data from sources such as applications, devices, and websites using services such as Amazon S3.

- Process and analyse: Use tools such as Amazon EMR to filter, aggregate, and analyse your data in order to generate meaningful insights. Amazon EMR enables you to quickly create clusters to process massive volumes of data with frameworks such as Apache Spark and Hadoop.

- Visualise and report: Using services such as Amazon QuickSight, create dashboards, reports, and visualisations to share significant findings and trends with teams. Allow executives and analysts to dig into the data to uncover fresh insights.

- Implement insights: Put your data discoveries to use by making changes to optimise important company KPIs such as increasing customer conversion, lowering costs, enhancing efficiency, and so on. Continue collecting fresh data and optimising over time.

By following these steps, you can handle, store, and analyse vast amounts of data rapidly and efficiently, generate insights that drive business value, and build a culture in which data informs crucial business decisions. AWS offers a broad collection of services to assist you with each phase of this Big Data workflow.
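The collect, process, and report steps above can be sketched in a few lines of plain Python. This is only a local illustration of the workflow's shape, not AWS code: the event records, field names, and the `collect`, `process`, and `report` helpers are all hypothetical, standing in for data landed in Amazon S3, a job on Amazon EMR, and a dashboard in Amazon QuickSight respectively.

```python
from collections import defaultdict

# Hypothetical raw events, standing in for files collected in a data lake.
RAW_EVENTS = [
    {"customer": "c1", "category": "fruit", "amount": 120.0},
    {"customer": "c2", "category": "dairy", "amount": 80.0},
    {"customer": "c1", "category": "fruit", "amount": 60.0},
    {"customer": "c3", "category": "dairy", "amount": None},  # malformed record
]


def collect(events):
    """Step 1: gather raw records (in practice, objects landed in storage)."""
    return list(events)


def process(events):
    """Step 2: drop malformed records and aggregate revenue per category."""
    totals = defaultdict(float)
    for event in events:
        if event["amount"] is None:
            continue  # filter out records with no usable amount
        totals[event["category"]] += event["amount"]
    return dict(totals)


def report(totals):
    """Step 3: render a plain-text summary, highest revenue first."""
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return [f"{category}: {amount:.2f}" for category, amount in ranked]


summary = report(process(collect(RAW_EVENTS)))
for line in summary:
    print(line)
```

Keeping each step as its own function mirrors the way the managed services are swapped in independently: the storage, processing, and reporting stages can each change without touching the others.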

What AWS Services Are Utilized To Manage Big Data?

AWS offers a comprehensive set of Big Data management services. These AWS services are classified into five broad categories:

1. Data Storage: Amazon S3 stores massive volumes of data, while Amazon OpenSearch Service runs managed search and analytics clusters.

a. Amazon OpenSearch Service: A fully managed service that makes it simple to deploy, secure, and operate OpenSearch clusters at scale in the AWS cloud. It improves resiliency through backpressure and admission control, which monitor cluster resources and incoming traffic and reject requests that could destabilise the cluster.

b. Amazon S3: Amazon S3 is an object storage service with industry-leading scalability, data availability, security, and performance. It is perfect for big data analytics, backup and restore, archiving, and many more applications.

2. Data Processing: AWS offers several options for data processing, including Amazon EMR for Hadoop and Spark workloads, Amazon Athena for ad hoc SQL queries, and AWS Glue for ETL operations.

a. Amazon EMR: Amazon Elastic MapReduce (EMR) is a cloud-based big data platform that enables rapid and cost-effective processing of large amounts of data. It includes an Amazon EKS deployment option, as well as cost monitoring options for effective resource utilisation.

b. Amazon MSK: Amazon Managed Streaming for Apache Kafka (Amazon MSK) is a fully managed service that makes it simple to build and run applications that handle streaming data using Apache Kafka. It supports tiered storage, which allows you to adjust storage capacity to your needs.

c. AWS Glue: AWS Glue is a fully managed extract, transform, and load (ETL) service that simplifies the preparation and loading of data for analytics. Using the AWS Glue Schema Registry, it can process streaming data from Amazon MSK.

d. Amazon Athena: Amazon Athena is a query service that allows you to easily analyse data in Amazon S3 using standard SQL. It does away with the need for sophisticated ETL operations, allowing organisations to query and analyse data with plain SQL statements.

3. Data Warehousing: Amazon Redshift is a data warehouse for large-scale analytics tasks.

a. Amazon Redshift: Amazon Redshift is a data warehousing solution for the cloud that allows enterprises to store and analyse big datasets. It is meant to be extremely scalable, allowing organisations to add or subtract computing resources as needed.

4. Data Streaming: For IoT, logs, and other data sources, Amazon Kinesis delivers real-time data streaming and processing.

a. Amazon Kinesis: Amazon Kinesis is a real-time data streaming service that assists enterprises in collecting, processing, and analysing data in real time. It can analyse enormous amounts of data from many sources, such as website clickstreams and social media feeds.

5. Machine Learning, Analytics, and Visualisation: AWS offers numerous analytics and data visualisation services, such as Amazon QuickSight and Amazon Machine Learning.

a. Amazon QuickSight: Amazon QuickSight is a cloud-based business intelligence platform that enables organisations to visualise and share data insights. It allows users to generate interactive visualisations, reports, and dashboards with data from AWS services and third-party sources like Salesforce and Adobe Analytics.

b. Amazon CodeWhisperer: Amazon CodeWhisperer is a machine learning tool that assists developers in writing better code. It has been trained on billions of lines of code and is capable of generating code suggestions in real time. It also flags potential security vulnerabilities and provides advice on how to improve your code.

These AWS services can be combined to create strong Big Data solutions capable of rapidly processing, storing, and analysing massive volumes of data, allowing organisations to acquire vital insights to inform their decision-making processes.
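Of the services above, Amazon Athena is the easiest to illustrate, because the analysis itself is ordinary SQL. The sketch below uses Python's built-in `sqlite3` module purely as a local stand-in: it is not Athena and does not read from Amazon S3, and the `clickstream` table and its rows are hypothetical. It only shows the kind of aggregate query an analyst might run against clickstream data.

```python
import sqlite3

# In-memory database as a local stand-in for a queryable dataset.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE clickstream (page TEXT, visitor TEXT)")
conn.executemany(
    "INSERT INTO clickstream VALUES (?, ?)",
    [("/home", "v1"), ("/home", "v2"), ("/cart", "v1"), ("/home", "v1")],
)

# Standard SQL: page views and unique visitors per page, busiest page first.
query = """
    SELECT page,
           COUNT(*) AS views,
           COUNT(DISTINCT visitor) AS visitors
    FROM clickstream
    GROUP BY page
    ORDER BY views DESC
"""
rows = conn.execute(query).fetchall()
conn.close()

for page, views, visitors in rows:
    print(page, views, visitors)
```

The point of the example is that no cluster setup or ETL code appears anywhere: the only thing the analyst writes is the query.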

What Are The Reasons To Opt For AWS For Managing Big Data?

There are several reasons why businesses choose AWS for Big Data. Some of them are:

Scalability: To handle the huge volumes of data required for big data analytics, AWS provides highly scalable storage and processing services. It enables you to rapidly scale resources up or down to match workloads.

Cost-effectiveness: AWS charges you only for the resources that you use. Its pay-as-you-go model helps to cut costs. You can start small and grow as your needs change.

Compliance and security: To keep your data safe, AWS provides strong security protections and complies with strict standards. Robust access controls, encryption, and audits all contribute to meeting security and compliance requirements.

Reliability: The global architecture of Amazon Web Services is designed to provide a highly stable and available environment for big data processing. With multiple Availability Zones, the chance of service disruption is reduced.

Machine learning and analytics: AWS provides a wide range of analytics and machine learning services for processing and analysing large amounts of data. AWS has you covered for everything from data lakes and warehouses to real-time streaming and machine learning.

Open source friendly: Many big data tools and technologies are free and open source. AWS works well with open source big data tools such as Apache Hadoop, Spark, Hive, and Presto. This simplifies the deployment of your chosen open source solutions on AWS.

AWS's scalability, cost-effectiveness, security, reliability, analytical capabilities, and open source friendliness make it a strong platform for managing big data. The advantages of using AWS to realise the potential of big data are substantial.
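The cost-effectiveness argument comes down to simple arithmetic, sketched below. Every number here is an assumption chosen for illustration: the rate of 0.25 per node-hour is hypothetical and is not an actual AWS price, and the workload (two nodes all month, with eight extra nodes for 40 peak hours) is invented.

```python
def monthly_cost(node_hours, rate_per_node_hour):
    """Cost of a month's usage, billed per node-hour."""
    return node_hours * rate_per_node_hour


RATE = 0.25           # hypothetical price per node-hour, not an AWS rate
HOURS_IN_MONTH = 730  # average number of hours in a month

# Fixed capacity: 10 nodes sized for peak load, running all month.
fixed = monthly_cost(10 * HOURS_IN_MONTH, RATE)

# Pay-as-you-go: 2 nodes all month plus 8 extra nodes for 40 peak hours.
on_demand = monthly_cost(2 * HOURS_IN_MONTH + 8 * 40, RATE)

savings = fixed - on_demand
print(fixed, on_demand, savings)
```

Under these assumptions the fixed fleet costs 1825.0 and the pay-as-you-go fleet 445.0, because the extra capacity is paid for only during the 40 hours it is needed. Real savings depend entirely on how spiky the workload is.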

How BigBasket Uses AWS for Big Data: A Case Study

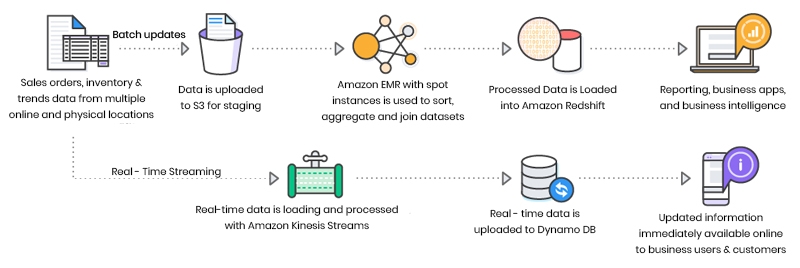

BigBasket, India's largest online grocery shop, uses AWS to manage its Big Data requirements. By using AWS, BigBasket was able to scale its Big Data infrastructure cost-effectively and efficiently. BigBasket used Amazon S3 for data storage, Amazon Redshift for data warehousing, and Amazon EMR for data processing.

BigBasket collects customer data via its website and mobile app, which includes:

- Browsing and purchase history

- Location

- Demographic information

They store this data in Amazon S3 buckets and use Amazon Kinesis Data Firehose to ingest real-time streaming data.

AWS Lambda and Amazon Kinesis were also used for real-time data streaming and processing. With these technologies, BigBasket was able to analyse its data quickly and efficiently, generating significant insights into customer behaviour and purchasing trends. As a result, the company was able to optimise its services and improve the customer experience.

BigBasket then examines the data for trends, using:

- Amazon Redshift for running complex queries on massive datasets

- Amazon EMR for processing and analysing large amounts of data

- Amazon QuickSight for visualising data and sharing reports

With these technologies, BigBasket can analyse shopping cart behaviour, repeat customers, seasonal purchasing patterns, and more.

Increasing Customer Satisfaction

BigBasket improves its service with actionable insights. The company can:

- Personalise product recommendations based on previous purchases.

- Optimise website layout and product placement.

- Identify potential locations for additional warehouses and delivery hubs.

- Develop targeted promotions and offers for various customer segments.

BigBasket was also able to handle the increasing volume of data created by its online grocery business thanks to the scalability of AWS services. It was able to reduce operational costs as well, because it paid only for the resources it used. Overall, BigBasket was able to leverage AWS for its Big Data needs, efficiently handling and analysing enormous volumes of data while extending its infrastructure at a low cost.

What Is AWS Big Data Certification And What Are The Benefits?

An AWS Big Data Certification validates your knowledge of AWS Big Data services. It demonstrates to potential employers that you are capable of managing and analysing massive datasets using AWS' cutting-edge tools and services.

The AWS Certified Big Data - Specialty certification is intended for those who have a background in data analytics and have used AWS services for big data. Obtaining this certification validates your ability to design and implement AWS big data solutions.

The following are some of the advantages of being AWS Big Data Certified:

- Validate your AWS big data abilities and experience: This certification validates your ability to build and deploy AWS big data solutions.

- Advance your professional career: This certification can help you get additional job opportunities and potentially earn more money.

- Stay current on AWS big data offerings: Preparing for this exam will improve your understanding of the most recent AWS technologies and best practices for extracting insights from data.

- Become a member of an exclusive club: There are only about 6,000 AWS Certified Big Data - Specialty professionals worldwide right now. Obtaining this qualification places you in an elite group.

This certification covers several aspects of big data, such as architecture, security, storage, processing, and analysis. To obtain this certification, you must pass the AWS Certified Big Data - Specialty exam, which consists of multiple choice and multiple response questions on essential concepts and use cases. With proper preparation, you can pass this exam and become AWS Big Data Certified.

What Are The Career Opportunities For AWS Big Data Certification?

There are numerous exciting career prospects available due to the growing need for big data and AWS certified individuals. You can pursue the following roles:

- Big Data Engineer: Design, build, and maintain AWS big data solutions. AWS Big Data Certification is often required.

- Data Scientist: Analyse data and obtain insights using big data tools and approaches. AWS certification is advantageous.

- Solutions Architect: Design and implement scalable, highly available, and fault-tolerant solutions on AWS. The AWS Certified Solutions Architect certification is preferred and can help secure a better salary package.

- Database Administrator: Install, configure, and maintain AWS databases. AWS certification validates key abilities.

- Consultant: Assist organisations with the implementation of AWS big data solutions. AWS certification credentials are highly regarded.

Those with the knowledge and skills to unlock big data on AWS have excellent job prospects. Obtaining AWS Big Data Certification can lead to additional career opportunities and greater compensation. Prepare to seize the possibilities that present themselves.

Conclusion

AWS Big Data is a significant resource for businesses trying to maximise the value of their data. AWS provides everything you need to begin your journey into the world of Big Data, including a wide choice of services, comprehensive information, and the option to obtain an AWS Big Data Certification.

You can now spin up powerful clusters, store large amounts of data, analyse it with machine learning, and visualise your findings. AWS makes big data available to businesses of all sizes and to individuals; all you have to do is dig in and get your hands dirty. The services we looked at are only the tip of the iceberg; AWS has a plethora of solutions to help you scale up your big data operations. Continue to study, build, and uncover the insights hidden in your data. The insights are there; you just need to know where to look for them. Embrace the power of AWS Big Data today by enrolling in an AWS big data training course to maximise the value of your data.

FAQs

Q1. What is Amazon EMR?

Ans: Amazon EMR is a leading cloud big data platform for data processing, interactive analysis, and machine learning using open source frameworks such as Apache Spark, Apache Hive, and Presto.

Q2. Why should I use Amazon EMR?

Ans: With Amazon EMR, you can focus on data transformation and analysis without worrying about managing compute resources or open source software, and it can also save you money.

Q3. What is Amazon EC2?

Ans: Amazon EC2 (Elastic Compute Cloud), introduced in 2006, provides virtual machines that let you deploy your own servers in the cloud while keeping OS-level control. It gives you control over instance configuration and upgrades, much as you would have with on-premises servers. EC2 supports both Linux and Microsoft Windows operating systems.

Q4. What is Amazon Redshift?

Ans: Amazon Redshift is Amazon's data warehouse product. It is a fast, powerful, fully managed, petabyte-scale data warehouse.

Q5. What distinguishes scalability from elasticity?

Ans: Scalability is a system's capacity to increase its hardware resources or processing nodes to meet rising demand.

Elasticity is a system's ability to add resources when they are needed to maintain performance and to release them, returning to its initial configuration, when they are no longer needed.
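The difference can be made concrete with a small simulation. This is a sketch under stated assumptions, not an AWS scaling policy: the load trace, the capacity of 100 requests per second per node, and the `nodes_needed` helper are all hypothetical.

```python
import math


def nodes_needed(requests_per_second, capacity_per_node, min_nodes=1):
    """Return how many nodes the current load requires."""
    required = math.ceil(requests_per_second / capacity_per_node)
    return max(min_nodes, required)


# Hypothetical hourly load trace, in requests per second.
load_trace = [50, 120, 480, 900, 300, 40]
CAPACITY = 100  # assumed requests per second one node can serve

# Elastic fleet: grows with demand and shrinks again when demand falls.
elastic_fleet = [nodes_needed(load, CAPACITY) for load in load_trace]

# Scalable-only fleet: grows to meet demand but never releases nodes.
scaled_fleet = []
current = 1
for load in load_trace:
    current = max(current, nodes_needed(load, CAPACITY))
    scaled_fleet.append(current)

print(elastic_fleet)
print(scaled_fleet)
```

Both fleets reach nine nodes at the peak, which is scalability. Only the elastic fleet drops back to one node afterwards, which is what keeps a pay-per-use bill in line with actual demand.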
