26

SepGrab Deal : Upto 30% off on live classes + 2 free self-paced courses - SCHEDULE CALL

In a statistical setting, probabilistic model-based clustering can be beneficial for arranging the data. The foundation of probabilistic model based clustering in data mining is finite combinations of multivariate models. This fundamental technology, based on finite mixtures of sequential models, is essential for quickly clustering sequential data. In other words, clustering is a technique for unsupervised learning in which we extract references from datasets that only contain input data and no identified outcomes.

Clustering is the process of dividing a population or collection of data elements into groups so that data points in the same group are more comparable to other data points in the same group and different from data points in other groups. Understanding probabilistic model based clustering in data mining begins with understanding data science; you can get an insight into the same through our Data Science training.

A statistical technique for data clustering is model based clustering. The observed data, also known as multivariate data, is thought to be the result of a finite combination of component models. Each component model is a probability distribution, usually a multivariate parametric distribution. This technique is an effort to improve the match between given data and a mathematical model. Additionally, it is based on the assumption that data are created by combining a simple probability distribution.

Several developing data mining applications need complex data clusterings, such as high-dimensional sparse text documents and continuous or discontinuous time sequences. Many of these applications have shown promising outcomes using model-based clustering approaches. model-based clustering is a natural choice for very high-dimensional vector and non-vector data when it is hard to extract essential features or determine a suitable measure of similarity between pairs of data objects.

Model-based clustering in data mining can be classified into the following types:

Statistical Approach:

A typical repetitive refining algorithm is expectation optimization. An improvement on k-means-

The central concept is as follows:

Machine Learning Approach:

Machine learning is a technique that creates complicated algorithms for massive data processing and provides results to its consumers. It employs sophisticated computers that can learn from experience and make predictions. The algorithms enhance themselves through frequent input of training data items. The primary goal of machine learning is to learn from data and develop models from it that people can understand and use. It is a well-known continuous conceptual learning approach that results in a clustering algorithm as a classification tree. Each node describes a concept and includes its probabilistic representation.

Restrictions

Neural Network Approach

The neural network technique portrays each cluster as an example, acting as a model for the collection. The new items are distributed to the group with the most similar examples based on some distance measure.

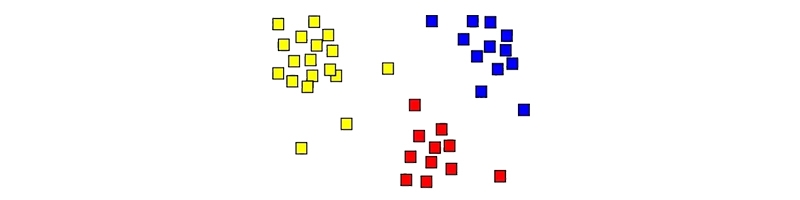

Model based clustering is a technique for discovering organizations in unlabeled data. It is the most prevalent type of unsupervised learning. Given an unknown dataset, a clustering algorithm can locate groupings of objects where the average distances between members of each cluster are closer than to members of other groups, as seen below:

This is a simple, two-dimensional example. Clusters are typically higher dimensional.

Clustering offers a wide range of practical uses. It is used in marketing, for example, to estimate consumer demographics. Knowing more about different market categories allows you to target consumers more precisely with advertisements.

Model based clustering can aid in the application of cluster analysis by requiring the analyst to formulate the probabilistic model used to fit the data, making the targets and cluster shapes intended to be more explicit than is typically the case when heuristic clustering algorithms are utilized.

Model-based clustering can be utilized for a variety of reasons.

It can be used as an exploratory tool to find structure in multivariate data sets, with the results allowing for data summarization and representation in a simplified and reduced form.

It can do vector quantization and data compression using appropriate prototypes and prototype assignments.

It indicates a latent group structure associated with unobserved heterogeneity. You can also learn the six stages of data science processing to better grasp the above topic.

Probabilistic model-based clustering is an excellent approach to understanding the trends that may be inferred from data and making future forecasts. The relevance of model based clustering, one of the first subjects taught in data science, cannot be overstated. These models serve as the foundation for machine learning models to comprehend popular trends and their behavior. You can also learn about neural network guides and python for data science if you are interested in further career prospects of data science.

The JanBask Training Team includes certified professionals and expert writers dedicated to helping learners navigate their career journeys in QA, Cybersecurity, Salesforce, and more. Each article is carefully researched and reviewed to ensure quality and relevance.

Cyber Security

QA

Salesforce

Business Analyst

MS SQL Server

Data Science

DevOps

Hadoop

Python

Artificial Intelligence

Machine Learning

Tableau

Interviews

Jorge Hall

The blog was super informative for me. However, Please write more Probabilistic model-based clustering.

JanbaskTraining

Glad, you enjoyed the blog.Thankyou for the feedback.

Beckham Allen

An extremely researched and nicely curated blog on model based clustering. Please write more about career choices, in the same area. Thankyou Janbask!

JanbaskTraining

Thank you for kind feedback.

Cayden Young

I enjoyed every bit of it and can't wait for more topics on the similar topic.

JanbaskTraining

Thank you for your comments.

Jaden Hernandez

Hi, Great article! I didn't know there are multiple things to know about these model based clustering. Thanks, team, waiting for more informative articles!!

JanbaskTraining

Thank you for your comment and for being a part of our community.

Emerson King

Thanks for this amazing post, provided almost all essential information that i was looking for.